Data.gov launches metrics tools

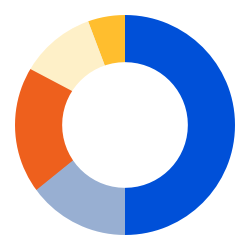

The new Data.gov metrics dashboard provides information on the most visited datasets, most downloaded files, most clicked outbound links, top search terms, and more.

A 10-year recap of GSA’s Digital Analytics Program (DAP) highlights its impact on federal agencies and the public, shares high-level observations drawn from government-wide website analytics and trends, and sets new goals for the next decade aimed at generating useful answers to meaningful questions that make government websites better.

The past year was like no other. We take a look at how it affected federal website traffic and search data.

Become a DAP Certified Analyst: The Digital Analytics Program (DAP) now offers the opportunity to become a DAP Certified Analyst. Prospective analysts must pass the DAP Certification Exam with a score of 80 percent or better. The exam consists of 50 multiple-choice questions, and prospective analysts can take it more than once. (via Digital Analytics Program)

Making Critical Government Information More Resilient: A roundup of steps that federal agencies and other government entities can take right now to improve the resilience of their websites and serve information more efficiently to the people who need it. (via 18F)

Digital.gov

An official website of the U.S. General Services Administration